| 26 July 2013 |

- Bug 22136 - Added text for Inband SPS/PPS support.

- Bug 22776 - Clarified that implementations are only required to support one SourceBuffer configuration at a time.

|

| 18 July 2013 |

- Bug 22117 - Reword byte stream specs in terms of UA behavior.

- Bug 22148 - Replace VideoPlaybackQuality.playbackJitter with VideoPlaybackQuality.totalFrameDelay.

|

| 02 July 2013 |

- Bug 22401 - Fix typo.

- Bug 22134 - Clarify byte stream format enforcement.

- Bug 22431 - Convert videoPlaybackQuality attribute to getVideoPlaybackQuality() method.

- Bug 22109 - Renamed 'coded frame sequence' to 'coded frame group' to avoid confusion around multiple 'sequence' concepts.

|

| 05 June 2013 |

- Bug 22139 - Added a note clarifying that byte stream specs aren't defining new storage formats.

- Bug 22148 - Added playbackJitter metric.

- Bug 22134 - Added minimal number of SourceBuffers requirements.

- Bug 22115 - Make algorithm abort text consistent.

- Bug 22113 - Address typos.

- Bug 22065 - Fix infinite loop in coded frame processing algorithm.

|

| 01 June 2013 |

- Bug 21431 - Updated coded frame processing algorithm for text splicing.

- Bug 22035 - Update addtrack and removetrack event firing text to match HTML5 language.

- Bug 22111 - Remove useless playback sentence from end of stream algorithm.

- Bug 22052 - Add corrupted frame metric.

- Bug 22062 - Added links for filing bugs.

- Bug 22125 - Add "ended" to "open" transition to remove().

- Bug 22143 - Move HTMLMediaElement.playbackQuality to HTMLVideoElement.videoPlaybackQuality.

|

| 13 May 2013 |

- Bug 21954 - Add [EnforceRange] to appendStream's maxSize parameter.

- Bug 21953 - Add NaN handling to appendWindowEnd setter algorithm.

- Alphabetize definitions section.

- Changed endOfStream('decode') references to make it clear that JavaScript can't intercept these calls.

- Fix links for all types in the IDL that are defined in external specifications.

|

| 06 May 2013 |

- Bug 20901 - Remove AbortMode and add AppendMode.

- Bug 21911 - Change MediaPlaybackQuality.creationTime to DOMHighResTimeStamp.

|

| 02 May 2013 |

- Reworked ambiguous text in a variety of places.

- Added Acknowledgements section.

|

| 30 April 2013 |

- Bug 21822 - Fix 'fire ... event ... at the X attribute' text.

- Bug 21819 & 21328 - Remove 'compressed' from coded frame definition.

|

| 24 April 2013 |

- Bug 21796 - Removed issue box from 'Append Error' algorithm.

- Bug 21703 - Changed appendWindowEnd to 'unrestricted double'.

- Bug 20760 - Adding MediaPlaybackQuality object.

- Bug 21536 - Specify the origin of media data appended.

|

| 08 April 2013 |

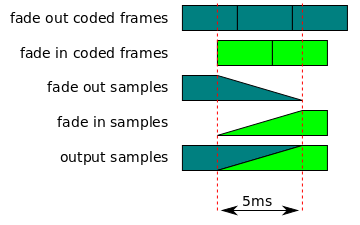

- Bug 21327 - Crossfade clarifications.

- Bug 21334 - Clarified seeking behavior.

- Bug 21326 - Add a note stating some implementations may choose to add fades to/from silence.

- Bug 21375 - Clarified decode dependency removal.

- Bug 21376 - Replace 100ms limit with 2x last frame duration limit.

|

| 26 March 2013 |

- Bug 21301 - Change timeline references to "media timeline" links.

- Bug 19676 - Clarified "fade out coded frames" definition.

- Bug 21276 - Convert a few append error scenarios to endOfStream('decode') errors.

- Bug 21376 - Changed 'time' to 'decode time' in append sequence definition.

- Bug 21374 - Clarify the abort() behavior.

- Bug 21373 - Clarified incremental parsing text in segment parser loop.

- Bug 21364 - Remove redundant condition from remove overlapped frame step.

- Bug 21327 - Clarify what to do with a splice that starts with an audio frame with a duration less than 5ms.

- Update to ReSpec 3.1.48

|

| 12 March 2013 |

- Bug 21112 - Add appendWindowStart & appendWindowEnd attributes.

- Bug 19676 - Clarify overlapped frame definitions and splice logic.

- Bug 21172 - Added coded frame removal and eviction algorithms.

|

| 05 March 2013 |

- Bug 21170 - Remove 'stream aborted' step from stream append loop algorithm.

- Bug 21171 - Added informative note about when addSourceBuffer() might throw a QUOTA_EXCEEDED_ERR exception.

- Bug 20901 - Add support for 'continuation' and 'timestampOffset' abort modes.

- Bug 21159 - Rename appendArrayBuffer to appendBuffer() and add ArrayBufferView overload.

- Bug 21198 - Remove redundant 'closed' readyState checks.

|

| 25 February 2013 |

- Remove Source Buffer Model section since all the behavior is covered by the algorithms now.

- Bug 20899 - Remove 'media segments must start with a random access point' requirement.

- Bug 21065 - Update example code to use updating attribute instead of old appending attribute.

|

| 19 February 2013 |

- Bug 19676, 20327 - Provide more detail for audio & video splicing.

- Bug 20900 - Remove complete access unit constraint.

- Bug 20948 - Setting timestampOffset in 'ended' triggers a transition to 'open'.

- Bug 20952 - Added update event.

- Bug 20953 - Move end of append event firing out of segment parser loop.

- Bug 21034 - Add steps to fire addtrack and removetrack events.

|

| 05 February 2013 |

- Bug 19676 - Added a note clarifying that the internal timestamp representation doesn't have to be a double.

- Added steps to the coded frame processing algorithm to remove old frames when new ones overlap them.

- Fix isTypeSupported() return type.

- Bug 18933 - Clarify what top-level boxes to ignore for ISO-BMFF.

- Bug 18400 - Add a check to avoid creating huge hidden gaps when out-of-order appends occur without calling abort().

|

| 31 January 2013 |

- Make remove() asynchronous.

- Added steps to various algorithms to throw an INVALID_STATE_ERR exception when async appends or remove() are pending.

|

| 30 January 2013 |

- Remove early abort step on 0-byte appends so the same events fire as a normal append with bytes.

- Added definition for 'enough data to ensure uninterrupted playback'.

- Updated buffered ranges algorithm to properly compute the ranges for Philip's example.

|

| 15 January 2013 |

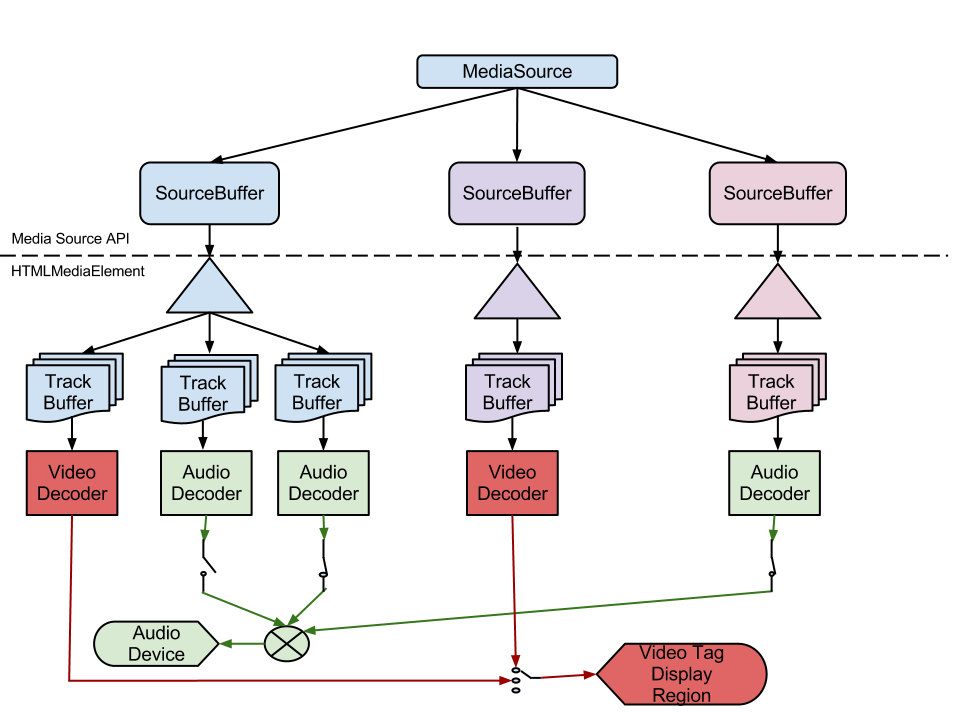

Replace setTrackInfo() and getSourceBuffer() with AudioTrack, VideoTrack, and TextTrack extensions. |

| 04 January 2013 |

- Renamed append() to appendArrayBuffer() and made appending asynchronous.

- Added SourceBuffer.appendStream().

- Added SourceBuffer.setTrackInfo() methods.

- Added issue boxes to relevant sections for outstanding bugs.

|

| 14 December 2012 |

Pubrules, Link Checker, and Markup Validation fixes.

|

| 13 December 2012 |

- Added MPEG-2 Transport Stream section.

- Added text to require abort() for out-of-order appends.

- Renamed "track buffer" to "decoder buffer".

- Redefined "track buffer" to mean the per-track buffers that hold the SourceBuffer media data.

- Editorial fixes.

|

| 08 December 2012 |

- Added MediaSource.getSourceBuffer() methods.

- Section 2 cleanup.

|

| 06 December 2012 |

- append() now throws a QUOTA_EXCEEDED_ERR when the SourceBuffer is full.

- Added unique ID generation text to Initialization Segment Received algorithm.

- Remove 2.x subsections that are already covered by algorithm text.

- Rework byte stream format text so it doesn't imply that the MediaSource implementation must support all formats supported by the HTMLMediaElement.

|

| 28 November 2012 |

- Added transition to HAVE_METADATA when current playback position is removed.

- Added remove() calls to duration change algorithm.

- Added MediaSource.isTypeSupported() method.

- Remove 'initialization segments are optional' text.

|

| 09 November 2012 |

Converted document to ReSpec. |

| 18 October 2012 |

Refactored SourceBuffer.append() & added SourceBuffer.remove(). |

| 08 October 2012 |

- Defined what HTMLMediaElement.seekable and HTMLMediaElement.buffered should return.

- Updated seeking algorithm to run inside Step 10 of the HTMLMediaElement seeking algorithm.

- Removed transition from "ended" to "open" in the seeking algorithm.

- Clarified all the event targets.

|

| 01 October 2012 |

Fixed various addsourcebuffer & removesourcebuffer bugs and allow append() in ended state. |

| 13 September 2012 |

Updated endOfStream() behavior to change based on the value of HTMLMediaElement.readyState. |

| 24 August 2012 |

- Added an early abort to the duration change algorithm.

- Added createObjectURL() IDL & algorithm.

- Added Track ID & Track description definitions.

- Rewrote start overlap for audio frames text.

- Removed rendering silence requirement from section 2.5.

|

| 22 August 2012 |

- Clarified WebM byte stream requirements.

- Clarified SourceBuffer.buffered return value.

- Clarified addsourcebuffer & removesourcebuffer event targets.

- Clarified when media source attaches to the HTMLMediaElement.

- Introduced duration change algorithm and updated relevant algorithms to use it.

|

| 17 August 2012 |

Minor editorial fixes. |

| 09 August 2012 |

Change presentation start time to always be 0 instead of using format specific rules about the first media segment appended. |

| 30 July 2012 |

Added SourceBuffer.timestampOffset and MediaSource.duration. |

| 17 July 2012 |

Replaced SourceBufferList.remove() with MediaSource.removeSourceBuffer(). |

| 02 July 2012 |

Converted to the object-oriented API. |

| 26 June 2012 |

Converted to Editor's draft. |

| 0.5 |

Minor updates before proposing to W3C HTML-WG. |

| 0.4 |

Major revision. Adding source IDs, defining buffer model, and clarifying byte stream formats. |

| 0.3 |

Minor text updates. |

| 0.2 |

Updates to reflect initial WebKit implementation. |

| 0.1 |

Initial Proposal |